The State of AI Fraud Should Alarm Every Enterprise Leader

As AI technology continues to advance, the threat of AI-driven fraud grows. This article delves into the rising concerns of AI fraud and its implications for enterprises.

Over the past few years, the field of AI has grown dramatically, with companies racing to see who will emerge as the leader. This has been good for consumers and end users, who have seen these advancements transform a range of industries.

AI has not only streamlined operations across major fields but also enhanced decision-making processes. For all its benefits, however, it has also introduced a new and growing threat, commonly called AI fraud.

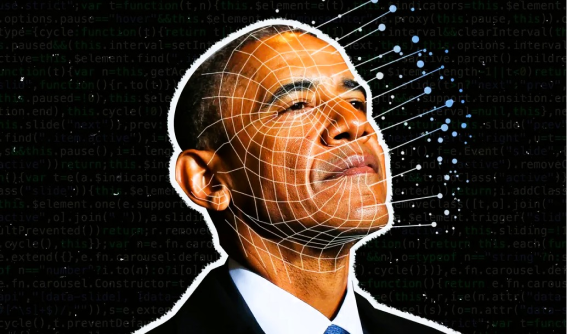

Understanding AI Fraud

AI fraud is the use of artificial intelligence to deceive people, businesses, and organizations in order to gain money, spread false information, or manipulate victims. It differs from traditional fraud in that it leverages machine learning models to bypass security measures.

Types of AI Fraud

- Deepfakes: AI-generated videos used to manipulate, spread misinformation, or impersonate others.

- Voice Cloning: AI mimics an individual's voice to deceive others.

- AI-Generated Phishing: Using deep learning to craft highly personalized phishing emails.

- AI-Powered Misinformation: Manipulating public perception with AI-driven content.

AI Threat to Enterprises

AI-driven fraud poses a critical threat to enterprises, with industries like finance, government, and healthcare among the most affected. In a notable 2019 case, a UK energy company lost $243,000 to a deepfake audio attack in which fraudsters used an AI-generated imitation of an executive's voice to request a funds transfer.

How to Safeguard Against AI Fraud

- Use Deepfake Detection Tools: Platforms like deeptrack analyze media for inconsistencies to help verify authenticity.

- Employee Training: Conduct awareness programs on recognizing AI-based fraud and verifying communications.

- Strengthen Cybersecurity: Implement multi-factor authentication, encrypt data, and monitor threats.

- Leverage AI for Fraud Prevention: AI models can analyze behavioral patterns to detect anomalies in transactions and communications.
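
To make the last point concrete, here is a minimal sketch of anomaly detection on transaction amounts. It is not a production fraud model: it uses a simple statistical rule (the modified z-score based on the median absolute deviation) as a stand-in for the behavioral models described above. The function name `flag_anomalies`, the sample `history` data, and the 3.5 threshold are illustrative assumptions, not taken from any specific product.

```python
from statistics import median


def flag_anomalies(amounts, threshold=3.5):
    """Return the indices of amounts that look unusual for this account.

    Hypothetical helper for illustration only. It scores each amount with
    the modified z-score: 0.6745 * |x - median| / MAD, where MAD is the
    median absolute deviation. The 3.5 threshold is an assumed default.
    """
    med = median(amounts)
    mad = median(abs(a - med) for a in amounts)
    if mad == 0:
        # At least half the values equal the median; scores are undefined,
        # so this sketch flags nothing rather than divide by zero.
        return []
    return [
        i
        for i, a in enumerate(amounts)
        if 0.6745 * abs(a - med) / mad > threshold
    ]


# Made-up transaction history: seven typical payments and one outlier.
history = [42.0, 38.5, 51.0, 45.2, 40.0, 39.9, 47.3, 4300.0]
print(flag_anomalies(history))  # prints [7]
```

A real deployment would likely score many signals at once (device, location, timing, payee) and route flagged items to human review rather than block them outright.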

Conclusion

AI fraud is no longer a future threat; it is happening now. Enterprise leaders must act proactively, implementing AI-powered security solutions to safeguard against evolving threats.